The dust has now settled on the Google I/O 2024 keynote, and there’s no doubt what the big theme was – Google Gemini and new AI tools completely dominated the announcements, giving us a glimpse of where our digital lives are headed. CEO Sundar Pichai was right to describe the event, at the very start of the keynote, as his version of The Eras Tour – specifically the “Era of Gemini”.

Unlike previous years, the entire keynote was about Gemini and AI; in fact, Google said “AI” a total of 121 times. From the unveiling of a futuristic AI assistant called “Project Astra” that could work on a phone – and maybe smart glasses, one day – to Gemini’s infusion into nearly every service or product the company offers, artificial intelligence was definitely the dominant theme.

The two-hour keynote was enough to melt the minds of all but the most ardent LLM enthusiasts, so we’ve broken down the top 7 things Google announced during its I/O 2024 keynote – and included the latest news on when we might actually see these new tools…

1. Google unveiled Project Astra – an “AI agent” for everyday life

So it turns out Google has an answer to OpenAI’s GPT-4o and Microsoft’s Copilot. Project Astra, dubbed an “AI agent” for everyday life, is essentially Google Lens on steroids, and it looks seriously impressive, capable of understanding, reasoning about and responding to live video and audio.

In a recorded demo on a Pixel phone, the user was seen walking around an office, providing a live feed from the rear camera and asking Astra questions on the fly. Gemini looked at and understood the visuals while answering the questions.

It speaks to the multimodal and long-context capabilities of Gemini’s backend, which works in an instant to identify what it sees and deliver a quick response. In the demo, it knew what a particular part of a speaker was and could even identify a London neighborhood. It’s also generative: it quickly came up with a band name for a cute puppy sitting next to a stuffed animal.

It won’t be released right away, but developers and press like us at TechRadar will be able to try it out at I/O 2024. And although Google has yet to clarify its plans, there was a teaser of glasses running Astra, which could mean Google Glass is coming back.

Still, even as a demo at Google I/O, it’s seriously impressive and potentially very compelling. It could supercharge smartphones and the current assistants we have from Google and even Apple. Moreover, it shows Google’s real ambitions for artificial intelligence: a tool that is extremely useful and no chore to use.

- When will it start? Unknown at this time – Google describes it as “our vision for the future of AI assistants”

2. Google Photos got a useful AI boost from Gemini

Have you ever wanted to quickly find a specific photo you took at some point in the distant past? Maybe it’s a note from a loved one, an early photo of a dog as a puppy, or even your license plate. Well, Google is making that wish a reality with a big update to Google Photos that merges it with Gemini. This gives Gemini access to your library, lets it search your photos, and easily delivers the result you’re looking for.

In a demo on stage, Sundar Pichai revealed that you can ask it for your license plate, and Photos will provide an image showing the plate along with the digits and characters that make it up. Likewise, you can ask for photos of when your child learned to swim, along with other details. This should make even the most disorganized photo libraries a little easier to search.

Google has called this feature “Ask Photos” and will roll it out to all users in the “coming weeks.” It will almost certainly be useful, and it may make people who don’t use Google Photos a little jealous.

3. Your child’s homework just got a whole lot easier thanks to NotebookLM

All parents will know the horror of trying to help children with homework; even if you once knew this stuff, there is no way the knowledge is still lurking in your brain 20 years later. But Google may have just made the task a lot easier, thanks to an upgrade to its NotebookLM note-taking app.

NotebookLM now has access to Gemini 1.5 Pro, and based on the demo given at I/O 2024, it will now be a better teacher than you. The demo showed Google’s Josh Woodward loading a notebook full of notes on a learning topic – in this case, science. With the click of a button, he was able to create a detailed study guide with additional deliverables, including quizzes and FAQs, all derived from the source material.

Impressive – but it was about to get a lot better. A new feature – still a prototype for now – was able to output all of the content as audio, essentially creating a podcast-style discussion. What’s more, the audio featured more than one speaker talking about the topic naturally, in a way that would definitely be more helpful than a frustrated parent trying to play the role of teacher.

Woodward even managed to interrupt and ask a question, in this case “give us a basketball example” – at which point the AI switched gears and came up with clever metaphors for the topic, but in an accessible context. The parents on the TechRadar team can’t wait to try this one out.

- When will it start? Unknown at this time

4. You’ll soon be able to search Google with video

In a strange on-stage demo involving a turntable, Google showed off a very impressive new search trick. You can now record a video and search with it to get results and, hopefully, an answer.

In this case, the Google employee was wondering how to use a record player; she pressed record to film the unit in question while asking a question, and then sent it. Google worked its search magic and provided a text answer that could also be read aloud. It’s a whole new way to search, like Google Lens for video, and it’s also significantly different from the upcoming everyday AI agent Project Astra, as the video has to be recorded and then searched, rather than being analyzed in real time.

Still, it’s part of the infusion of Gemini and generative AI into Google Search, aimed at keeping you on that page and making it easier to get answers. Before this video search demo, Google showed off a new generative experience for recipes and nutrition. This allows you to search for something in natural language and get recipes or even meal recommendations on the results page.

Simply put, Google is going full throttle with generative AI in search, both for the results themselves and for the different ways to get them.

- When will it start? Google says “video search will be available soon to US English Search Labs users” and “will expand to more regions over time”

5. Google joined the generative video party with Veo

For the past few months, we’ve been admiring the creations of OpenAI’s text-to-video tool Sora, and now Google is joining the generative video party with its new tool called Veo. Like Sora, Veo can generate one-minute videos in 1080p quality, all from a simple prompt.

This prompt can include cinematic effects, such as a request for a time-lapse or an aerial shot, and early samples look impressive. You don’t have to start from scratch, either – upload an input video along with a command and Veo can edit the clip to suit your request. There is also an option to add masks and adjust specific parts of a video.

The bad news? Like Sora, Veo is not yet widely available. Google says it will be available to select creators through VideoFX, one of its experimental Labs features, “in the coming weeks.” It may be a while before we see widespread adoption, but Google has promised to bring the feature to YouTube Shorts and other apps. And that will make Adobe fidget uneasily in its AI-generated chair.

- When will it start? You can join Veo’s waiting list now, with Google saying it will be “available to select creators in a private preview on VideoFX.” Google also says that “in the future, we will add some of Veo’s capabilities to YouTube Shorts” and other products

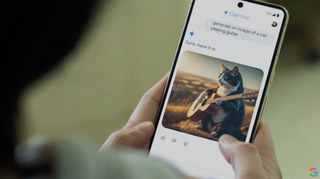

6. Android got a big Gemini infusion

Much like Google’s Circle to Search feature sits on top of an app, Gemini is now being integrated into the core of Android to fit into your workflow. As demonstrated, Gemini can now view, read and understand what’s on your phone’s screen, allowing it to anticipate questions about what you’re looking at.

So it can get the context of a video you’re watching, anticipate a summary request when you’re viewing a long PDF file, or be ready for countless questions about an app you’re in. Having context-aware AI built into a phone’s operating system isn’t a bad thing by any means, and it could prove to be super useful.

Along with Gemini integration at the system level, Gemini Nano with multimodality will launch later this year on Pixel devices. What will it enable? Well, it should speed things up, but the standout feature so far is that Gemini can listen to calls and alert you in real time if a call is spam. It’s pretty cool and builds on call screening, a long-standing feature of Pixel phones. It’s set to be faster and to do more processing on the device instead of sending data to the cloud.

- When will it start? Google says “Gemini Nano with multimodality” will be available “on Pixel later this year.” Circle to Search improvements and a new feature that flags suspected bank-fraud phone calls will also arrive “later this year”

7. Google Workspace is about to get a lot smarter

Workspace users get a treasure trove of Gemini integrations and useful features that could make a big impact on a daily basis. Within Gmail, thanks to the new sidebar on the left, you can ask Gemini to summarize all recent conversations with a colleague. The result is then presented as bullet points highlighting the most important aspects.

Gemini in Google Meet can give you the highlights of a meeting or tell you what other people on the call might be asking you. You will no longer need to take notes during the call, which can be useful if it is a long one. Within Google Sheets, Gemini can help you make sense of data and process requests like pulling a specific amount or set of data.

Virtual teammate “Chip” may be the most futuristic example. It can live in Google Chat and be summoned for various tasks or queries. While these tools will be coming to Workspace, likely through Labs first, the remaining question is when they will arrive for regular Gmail and Drive customers. Given Google’s approach to AI for everyone and how hard it is pushing AI in search, it’s probably only a matter of time.

- When will it start? The Gemini sidebar in Gmail, Docs, Drive, Slides, and Sheets will be upgraded to Gemini 1.5 Pro “starting today” (May 14). For the Gmail app, the “email summarization” feature will be available to Workspace Labs users “this month” (May) and to Gemini for Workspace customers and Google One AI Premium subscribers “next month”